Why innovation drives hard rock and metal's power

- Travis B

- May 5

- 10 min read

There’s a stubborn myth out there that heavy music is purely raw, unfiltered emotion, that it comes from some primal place untouched by technology or craft. We get why people believe that. When you hear a wall of distorted guitars and a vocalist screaming from somewhere deep in their gut, it feels ancient and instinctive. But the truth is that music production in metal has always been inseparable from the tools used to create it. From the first fuzz pedal to AI-assisted video production, innovation and emotional power have always moved together in hard rock and heavy metal.

Key Takeaways

| Point | Details |
| --- | --- |
| Tech drives genre evolution | Electric guitars and effects transformed hard rock and metal’s sound and expressive power. |
| Production shapes storytelling | Modern digital tools let artists choose between raw authenticity and powerful sonic polish. |
| AI sparks controversy | AI-generated music expands creative possibilities but challenges the emotional authenticity fans value. |
| Innovation deepens emotion | Technology enhances lyric-driven experiences, safeguarding personal impact while expanding the genre’s reach. |
| Soul survives through change | Despite fears, metal and hard rock continually renew their emotional depth by adapting to innovation. |

How innovation shaped the sound of hard rock and metal

If you strip away the technology, hard rock and metal simply don’t exist. That’s not an opinion, it’s a fact built into the genre’s DNA. The electric guitar pickup, patented as US2089171 in 1937, was the first major domino. It converted string vibrations into an electrical signal, making amplification possible. Then came US2741146 in 1956, a patent that helped define how pickups captured inharmonic overtones, those buzzing, complex harmonics that give a cranked guitar its characteristic growl.

Amplification didn’t just make music louder. It changed what music could say. You could sustain a note until it fed back into something that sounded like agony or ecstasy. You could stack distortion until the sound resembled a chainsaw or a thunderstorm. Technological innovations like electric guitar pickups, fuzz pedals, distortion effects, and amplification were crucial in defining the heavy sound of hard rock and heavy metal, enabling louder volumes, richer timbres, and expressive “noise” that became core to the genres’ aesthetics.
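To make the idea of "stacking distortion" concrete, here is a minimal sketch of what overdrive does to a signal. It assumes a pure sine wave as a stand-in for a clean string and uses `tanh` soft clipping as a generic textbook model of distortion, not the circuit of any particular pedal or amp. The function names, the 110 Hz test tone, and the gain value are all illustrative choices, not anything from a real product.

```python
import math

SAMPLE_RATE = 8000  # samples per second (assumed for this sketch)

def sine(freq_hz, n=SAMPLE_RATE):
    """One second of a pure sine tone, standing in for a clean string signal."""
    return [math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i in range(n)]

def overdrive(samples, gain):
    """Soft clipping: boost the signal, then squash its peaks with tanh."""
    return [math.tanh(gain * s) for s in samples]

def harmonic_level(samples, freq_hz):
    """Amplitude of a single frequency component (one-bin Fourier sum)."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * freq_hz * i / SAMPLE_RATE)
             for i, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE)
             for i, s in enumerate(samples))
    return 2 * math.hypot(re, im) / n

clean = sine(110)                    # roughly a low A
driven = overdrive(clean, gain=10)   # hypothetical gain setting

# Compare how much energy sits at the third harmonic (330 Hz).
for name, signal in (("clean", clean), ("driven", driven)):
    print(f"{name}: third harmonic level = {harmonic_level(signal, 330):.3f}")
```

The clean tone should show essentially nothing at 330 Hz, while the driven tone shows a clear component there. Those added overtones are a large part of what the ear hears as growl and thickness.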

Here’s how that evolution looked across decades:

| Era | Key innovation | Impact on sound |
| --- | --- | --- |
| 1950s | Humbucker pickup | Warmer, thicker tone with less noise |
| 1960s | Fuzz pedal | First deliberate distortion as expression |
| 1970s | High-gain amplifiers | Defined classic metal crunch |
| 1980s | Digital effects processors | Added depth, reverb, and dimension |
| 1990s | Digital audio workstations (DAWs) | Precision editing and layering |
| 2010s onward | AI and sample tech | New hybrids and production possibilities |

Key breakthroughs that built the genre we love:

- Fuzz pedals created deliberate distortion that felt emotional and dangerous
- Wah pedals gave guitars a vocal, expressive quality
- Multi-track recording allowed layering of guitar tones that no single instrument could produce alone
- High-gain amplifiers like the Marshall stack became synonymous with heaviness
- Digital audio workstations (DAWs) put studio-level production in the hands of independent artists

Pro Tip: If you want to understand why a particular guitar tone hits differently, study the signal chain. From pickup type to amp model to effects chain, each decision shapes how an emotion lands when it reaches your ears.

“The electric guitar didn’t just change music. It gave anger, grief, and rebellion a frequency they could live in.” This is something we at Winter Agony have felt every time we plugged in since 2005.

The music video evolution in metal followed a similar path, with visual storytelling expanding alongside audio innovation. Technology didn’t replace the raw feeling. It gave that feeling more ways to hit harder.

Production methodologies: Naturalistic vs. hyperreal

Once artists could record and produce music with precision, a real philosophical split opened up. Two distinct schools of thought emerged in metal production, and they’re still debating each other today.

Naturalistic production is about capturing what happens in a room. It prioritizes the energy of a real performance, the slight timing variations between musicians, the breathing space in a mix, and the feeling that you’re hearing human beings making music together. Bands recorded this way sound like they could tear up your living room if they set up in it.

Hyperreal production takes a different view. It asks: why accept the limitations of a live performance if technology lets us exceed them? This approach uses precise editing, pitch correction, sample reinforcement, and layering that can push a mix to 50, 75, even 100-plus simultaneous tracks. Findings from the HiMMP study contrast these two schools of modern metal production and show both pushing “heaviness” beyond what any live performance could achieve.

A real-world example of the tension between these approaches is the multi-producer mixing experiment around tracks like “In Solitude,” where different producers were handed the same raw recordings and came back with dramatically different results. Some mixes felt warm and lived-in. Others sounded like a machine designed specifically to shake the walls.

Comparing the two approaches:

| Factor | Naturalistic | Hyperreal |
| --- | --- | --- |
| Track count | 15 to 30 typical | 50 to 100-plus |
| Drum sound | Live room bleed preserved | Samples replace or reinforce |
| Guitar tone | Amp recorded naturally | Re-amped, blended, layered |
| Emotional feel | Human, raw, immediate | Punishing, precise, massive |
| Best suited for | Storytelling and dynamics | Pure sonic impact |

Here’s why this matters for you as a listener:

- The choice of production approach shapes how lyrics land. A naturalistic mix puts the vocalist in the room with you. A hyperreal mix makes the vocals feel like they’re inside the music itself.
- Dynamics are everything in emotional storytelling. Naturalistic mixes preserve the quiet moments that make the loud moments hit harder.
- Hyperreal production can make a small band sound like a force of nature, which opens doors for independent artists who lack expensive studio resources.

Neither approach is inherently better. The best producers, and the best bands, understand when to use each.
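The point about dynamics can be put in rough numbers. The sketch below measures the level gap between a quiet passage and a loud one, then shows how heavy compression shrinks that gap. Everything here is an assumption made for illustration: the sine tones stand in for a verse and a chorus, the amplitudes are arbitrary, and `compress` is a crude sample-by-sample compressor (real ones track the signal envelope with attack and release times). It is not a claim about how any specific record was mixed.

```python
import math

def tone(amplitude, freq_hz=110, sample_rate=8000, n=4000):
    """Half a second of a sine tone at a given peak amplitude (test signal)."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(n)]

def rms_db(samples):
    """Average (RMS) level in decibels relative to full scale."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10 * math.log10(mean_square)

def contrast_db(quiet, loud):
    """How many decibels louder the loud passage is than the quiet one."""
    return rms_db(loud) - rms_db(quiet)

def compress(samples, threshold=0.1, ratio=8.0):
    """Crude compressor: anything above the threshold is scaled down by ratio."""
    out = []
    for s in samples:
        level = abs(s)
        if level > threshold:
            level = threshold + (level - threshold) / ratio
        out.append(math.copysign(level, s))
    return out

quiet_verse = tone(0.05)   # hypothetical whispered passage
loud_chorus = tone(0.8)    # hypothetical full-band hit

natural = contrast_db(quiet_verse, loud_chorus)
squashed = contrast_db(compress(quiet_verse), compress(loud_chorus))

print(f"contrast before compression: {natural:.1f} dB")
print(f"contrast after compression:  {squashed:.1f} dB")
```

The gap after compression comes out smaller than the gap before it. That is the trade-off in miniature: a denser, more controlled mix gains consistency and loses some of the distance between the whisper and the scream.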

Pro Tip: When you’re listening to a metal record, pay attention to what happens in the quietest moments. How the producer handles those spaces tells you almost everything about their philosophy.

“We’ve worked in both worlds. Sometimes a raw, slightly imperfect take carries more truth than a perfectly edited one. That imperfection is where the story lives.”

Exploring production innovations in hard rock reveals how deeply these choices reflect an artist’s values, not just their gear. And learning AI video tips for metal shows how this same debate is now playing out in visual production too.

AI and the new frontier: Opportunities and controversies

This is where things get genuinely complicated, and honestly, it’s a conversation we think about a lot as a band navigating this moment in music history.

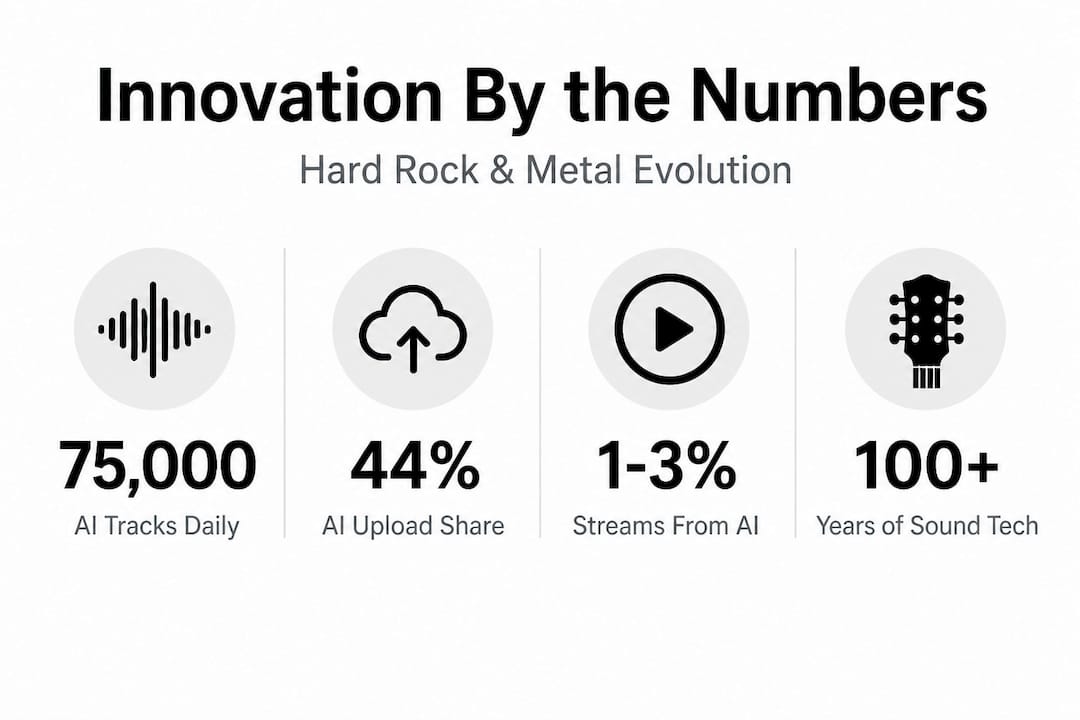

The numbers are striking. Deezer reports 75,000 AI-generated tracks uploaded daily, making up roughly 44% of all uploads to its platform. Yet these tracks account for only 1 to 3% of actual streams. People are creating AI music at an enormous scale, but listeners are still choosing human-made music when it comes to what they actually want to hear repeatedly. That’s telling.
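A quick back-of-the-envelope calculation shows how lopsided those figures are. This sketch takes the quoted numbers at face value and treats upload share and stream share as directly comparable, which is a simplification: uploads are counted per day, while streams are spread across an entire catalog. The variable names are made up for this example.

```python
# Figures as quoted in the article (taken at face value).
ai_uploads_per_day = 75_000
ai_upload_share = 0.44
ai_stream_share_range = (0.01, 0.03)

# If 75,000 tracks are 44% of uploads, the implied daily total follows.
total_uploads = ai_uploads_per_day / ai_upload_share
human_uploads = total_uploads - ai_uploads_per_day

# Streams actually received, relative to what a proportional share would be.
low, high = (share / ai_upload_share for share in ai_stream_share_range)

print(f"implied total uploads per day: {total_uploads:,.0f}")
print(f"implied non-AI uploads per day: {human_uploads:,.0f}")
print(f"AI stream share vs. upload share: {low:.0%} to {high:.0%}")
```

Under these assumptions, AI-generated tracks draw only a small fraction of the listening their share of uploads would suggest.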

There’s also a darker side. Studies suggest that up to 85% of fraudulent streaming uploads involve AI-generated content, which hurts real artists financially and pollutes the ecosystem everyone shares.

The community is genuinely divided:

- Pro-AI voices point to accessibility, the ability for a musician without a full band or budget to create layered, produced tracks quickly. Platforms like Soundverse are building entire ecosystems around this capability.
- Anti-AI voices, including respected metal figures like Michael Kiske and Max Cavalera, argue that AI-generated music lacks something fundamental. It doesn’t come from experience, pain, or the years of practice that give music its weight.
- The pragmatic middle (where we’d honestly place ourselves) sees AI as a tool with legitimate uses, especially for visual content and production assistance, without wanting it to replace the human at the center of the song.

| AI in music | Opportunity | Challenge |
| --- | --- | --- |
| Track generation | Fast production for independent artists | Lacks authentic human narrative |
| Vocal processing | New performance possibilities | Risk of losing natural emotion |
| Music video creation | High quality visuals at low cost | Authenticity questions from fans |
| Streaming distribution | More music reaches more ears | Flooding platforms with low-effort content |

Pro Tip: If you’re using AI music tools for rock production, treat AI as a collaborator for specific tasks (video visuals, mixing assistance, vocal layering) rather than the source of the song’s emotional core. Keep the human story central.

Staying current with AI trends in music is important right now because the landscape is shifting fast. What feels experimental today might be standard practice in two years.

Innovation amplifies emotional storytelling in lyrics

Here’s the part that doesn’t get talked about enough. When people worry that technology threatens the emotional truth in metal lyrics, they’re missing something important about how the two things interact.

Think about what amplification does to a vocal performance. Innovation in hard rock and heavy metal has always meant embracing technology, from amps to AI, to amplify both heaviness and storytelling. But the concern from purists is real too: technology risks diluting the human emotion that makes lyrics personally impactful.

Both things can be true. The key is intention.

When Kage from Winter Agony writes lyrics about real experiences, about struggle, survival, and the weight of carrying certain memories, the production choices around those lyrics either honor that truth or undercut it. A vocal run through the right chain of processing doesn’t hide emotion. It projects it. It takes something personal and makes it land inside another person’s chest.

Ways technology deepens lyric-driven storytelling:

- Reverb and space create an atmospheric context that matches the emotional weight of words
- Distortion on vocals can express anger or pain more viscerally than any clean tone
- Layered harmonies add depth to confessional lyrics, like multiple voices confirming the same truth
- Dynamic mixing lets a whispered line carry as much weight as a scream
- AI-assisted video production creates visuals that extend the lyric’s world beyond what any budget-limited shoot could achieve

“Every piece of gear we’ve ever used, every new technique we’ve learned, has been in service of saying something true. The tools don’t make the story. The tools let more people feel it.”

Pro Tip: When writing with technology in your corner, always start with the emotional core of the lyric before you touch any effects or processing. The tech should serve the story, never replace it.

Studying emotional storytelling in music shows you how the most impactful metal songs balance technical craft with naked vulnerability. And exploring songwriting emotion in hard rock gives you a deeper understanding of how the best writers in the genre keep their humanity front and center no matter what tools they use.

Why the soul of metal survives through innovation

Here’s our honest, slightly contrarian take on all of this: the fear that technology will kill the soul of heavy music assumes that soul is fragile. It’s not. Metal has absorbed every major technological shift in music history and come out louder, heavier, and more emotionally complex on the other side.

Figures like Michael Kiske have spoken out strongly against AI music in 2026, and we respect that position. The metal community remains split: pro-AI voices point to accessibility and new creative hybrids, while anti-AI voices such as Kiske and Cavalera argue the music lacks soul. Metal’s history, though, repeatedly shows technology being absorbed without replacing human expression.

When distortion was new, people said it was noise, not music. When digital production replaced tape, traditionalists mourned the warmth of analog. When Pro Tools put a full studio in a laptop, some said it would create soulless, perfect records. Instead, it gave artists in Kentucky, in rural towns with no access to expensive studios, the ability to make real records and tell their real stories. That’s exactly what we did.

The soul of metal doesn’t live in the tools. It lives in why you pick them up. A band writing from real experience, from years of friendship, pain, loss, and resilience, brings that to every track regardless of whether it was tracked on tape or generated with AI assistance for the visual side of the release. The emotional truth travels through whatever medium carries it.

What we believe, after years of making this music, is that fans are the final filter. They can tell the difference between something made to fill a quota and something made because someone had to say it or they’d break. That instinct in listeners doesn’t go away because AI exists. If anything, authentic music becomes more valuable in a world flooded with generated content.

Learning more about music’s role in fans’ lives makes clear that the connection people feel to heavy music is deeply personal, tied to specific moments, specific losses, specific victories. No algorithm creates that. Artists who use technology as a means of expression rather than a replacement for it will always find their people.

Discover your next innovation-driven music experience

We’ve covered a lot of ground here, from the first guitar pickup to AI-generated video production, from naturalistic mixing philosophies to the emotional truth that lives inside a well-placed reverb tail. All of it points in the same direction: innovation and authentic storytelling aren’t opposites in hard rock and metal. They’ve always been partners.

If you want to keep going deeper on these ideas, our site is the place to do it. We’ve built a space where music technology, personal storytelling, and the hard rock and metal community come together. Explore music innovation through articles, band updates, production insights, and real conversations about where this music is going. Winter Agony was born from resilience and dedication, and everything we put out here reflects that. Come find us there, and bring your curiosity with you.

Frequently asked questions

What innovations made hard rock and metal possible?

Electric guitar pickups, fuzz pedals, distortion, and amplification gave these genres their signature heavy, expressive sound by enabling louder volumes and richer, more complex timbres. Without these tools, the emotional range that defines the genres simply wouldn’t exist.

How does AI affect metal music production?

Deezer reports 75,000 AI tracks uploaded daily, representing 44% of uploads but only 1 to 3% of streams, showing that rapid creation is happening but listeners still seek out human-made music. The debate centers on whether AI-assisted production can carry the emotional authenticity the genre demands.

What’s the difference between naturalistic and hyperreal music production?

Naturalistic production captures organic performance with real dynamics and human feel, while hyperreal production uses technology to push heaviness beyond what any live performance could achieve, often using 50 to 100-plus tracks. Both have their place depending on what emotional effect an artist is going for.

Does innovation threaten emotional storytelling in metal lyrics?

Most evidence, and most of metal’s own history, shows that innovation amplifies storytelling rather than diminishing it, as long as artists keep authentic human experience at the center of their work. The tools serve the story when the artist is genuinely committed to telling a true one.
